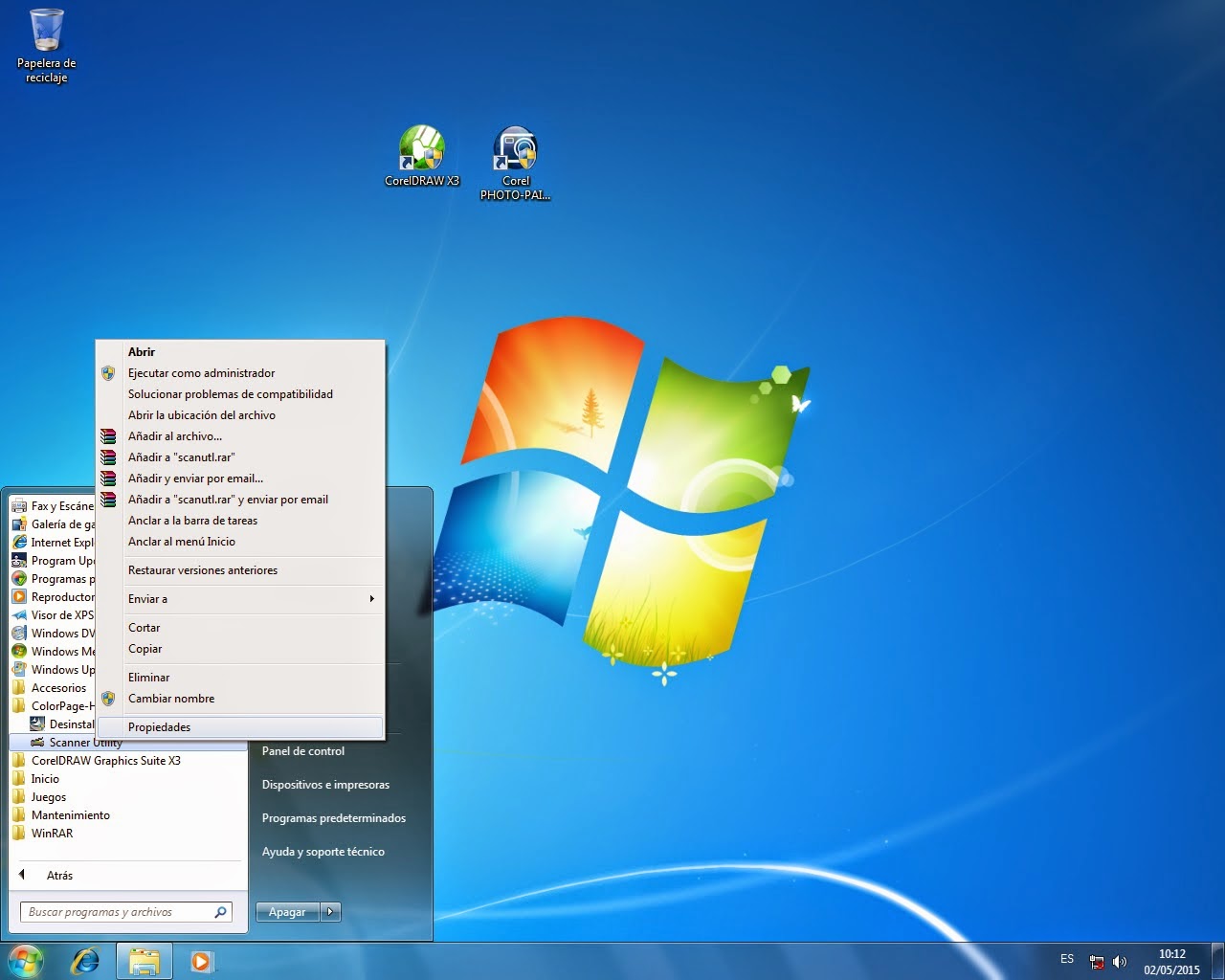

Normally, Windows automatically recognizes the device and installs or updates the required drivers as soon as you plug it into a USB port. Sometimes, however, Windows fails to update the drivers automatically. If this happens, update the drivers manually by following these steps:

1. Download the device drivers to your computer from: https://www.ftdichip.com/Drivers/VCP.htm

Select the latest Windows release. Choose 64-bit or 32-bit to match your version of Windows. Most computers running recent versions of Windows 10 are 64-bit.

Direct link: https://www.ftdichip.com/Drivers/CDM/CDM21228_Setup.zip

2. The download is a self-extracting archive. When launched, it extracts an installer file to the folder you specify, but it DOES NOT install the device drivers yet.

3. Navigate to the target folder and launch CDM21228_Setup.exe to install the device drivers.

4. Click through the installer; all default values are fine.

5. Plug the device into the USB port and Windows will take over the rest of the setup process. The setup process installs two new devices on your computer: the USB Serial Port and the USB Serial Converter.

Now all device drivers are set up and good to go.

6. Run FORScan and set up the FORScan recommended connection settings.
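For step 1 above, if you are unsure whether your Windows installation is 32-bit or 64-bit, a short script can report it. This is only a sketch: the function names and the set of architecture names are assumptions made for illustration and are not part of the FTDI or FORScan software.

```python
import platform

# Architecture names treated as 64-bit. This set is an assumption for
# illustration and is not exhaustive.
SIXTY_FOUR_BIT_NAMES = {"amd64", "x86_64", "arm64", "aarch64"}


def driver_bitness(machine: str) -> str:
    """Return "64-bit" or "32-bit" for a machine string such as "AMD64"."""
    if machine.strip().lower() in SIXTY_FOUR_BIT_NAMES:
        return "64-bit"
    return "32-bit"


def local_driver_bitness() -> str:
    """Classify the computer this script is running on."""
    return driver_bitness(platform.machine())
```

Calling `local_driver_bitness()` on the computer that will run FORScan tells you which driver download to pick. The Windows Settings app shows the same information under System type.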

------------------------------------------------------------

II - OHP WiFi Device Installation Guide:

This manual shows you how to install the OHP ELM327 OBD2 WiFi device and connect it to your vehicle using a Windows desktop, laptop, or tablet, or an iPhone or Android phone.

II.a - For Desktop/Laptop/Tablet Windows computers

1. Plug the device into your vehicle's OBDII port.

2. Check for new WiFi networks, then connect to the WiFi OBDII network.

3. Download the FORScan software here: https://forscan.org/download.html. Then install it on your computer.

4. Open the FORScan software.

5. Go to Settings and select WiFi under the Connection tab. Make sure to check the Auto-connect box.

6. All set. You are good to go. If you are having issues connecting the device, please email our customer support at support@ohptools.com.
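If FORScan cannot connect over WiFi, one quick check is whether the computer can open a TCP connection to the adapter at all. The sketch below is a hypothetical helper, not part of FORScan. The address 192.168.0.10 and port 35000 are only a common default for ELM327-style WiFi adapters and are not stated in this guide, so check your adapter's documentation for the real values.

```python
import socket

# ASSUMPTION: a common default for ELM327-style WiFi adapters.
# This guide does not specify the address or port of the OHP device.
ASSUMED_HOST = "192.168.0.10"
ASSUMED_PORT = 35000


def adapter_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False
```

With the computer joined to the adapter's WiFi network, `adapter_reachable(ASSUMED_HOST, ASSUMED_PORT)` returning `False` suggests a network or address problem rather than a FORScan setting.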


II.b - For iPhones and Android Phones

1. Plug the device into your OBDII port.

2. Open your phone's settings, check for new WiFi networks, then connect to the WiFi OBDII network.

3. Download and install the FORScan Lite phone app from the Google Play Store (Android) or the App Store (iOS).

4. Open the FORScan Lite phone app.

5. In the FORScan Lite phone app, press connect to connect to the vehicle.

6. All set. You are good to go. If you are having issues connecting the device, please email our customer support at support@ohptools.com.


*Note: You CANNOT apply configurations, programming functions, and some service procedures using FORScan Lite. To use these functions, run the FORScan software on a Windows laptop, desktop computer, or tablet instead.

------------------------------------------------------------

III - OHP Bluetooth Device Installation Guide

There are two ways to connect the OHP OBD2 Bluetooth device to your vehicle: through a Windows desktop, laptop, or tablet, or through an Android phone. The steps for each are below:

III.a - Via Desktop/Laptop/Tablet Windows Computers

1. Plug the device into your vehicle's OBDII port.

2. Turn on Bluetooth on your Windows desktop, laptop, or tablet. Search for the Bluetooth signal from the OBD2 device and connect to it.

3. Download the FORScan software here: https://forscan.org/download.html. Then install it on your computer.

4. Open the FORScan software.

5. Go to Settings and select Bluetooth under the Connection tab. Make sure to check the Auto-connect box.

6. All set. You are good to go.

III.b - Android Phones

1. Plug the device into your OBD2 port.

2. Open your phone's settings, turn on Bluetooth, then find and connect to the Bluetooth signal from the OBD2 device.

3. Download and install the FORScan Lite phone app from the Google Play Store.

4. Open the FORScan Lite phone app.

5. In the FORScan Lite phone app, press connect to connect to the vehicle.

6. All set. You are good to go.

*Note: You CANNOT apply configurations, programming functions, and some service procedures using FORScan Lite. To use these functions, run the FORScan software on a Windows laptop, desktop computer, or tablet instead.

------------------------------------------------------------

IV - FORScan Software and Phone App:

http://forscan.org/download.html

You can download the FORScan software for Windows and the FORScan app for iOS and Android on this page. Remember to check compatibility before purchasing it.

------------------------------------------------------------

V - How to Obtain FORScan Extended License

1. Register on the FORScan forum and wait until you are accepted (this may take a couple of hours depending on time zones): https://forscan.org/forum/

2. Once accepted, log in with your username and password.

3. Generate a FORScan Extended License. Link to license generator is here.

4. The license generator will take you here:

5. Fill in the blanks. You will be asked for a hardware ID. This is your computer's ID as identified by FORScan, so launch FORScan and find your ID as shown. Copy it and paste it into the web browser, then generate the license.

6. Success! Download the license file to your computer. We suggest saving it to My Documents or the Desktop, because Windows security has become quite strict and you may have a hard time accessing the file later if you save it to the default location in a system folder. The license also remains available on the FORScan forum, so you can download it again at any time.


7. Load License Key into the FORScan software. Note: You need to be connected to the Internet when you load a new license key.


8. SUCCESS! FORScan License is now Extended.

------------------------------------------------------------

VI - FORScan Forum:

http://forscan.org/forum

The official FORScan forum offers general information and support. Exchange ideas with other users on vehicle diagnostics, programming, configurations, and maintenance on this forum.

Customer support by e-mail: support@ohptools.com


* RECOMMENDED * Mellanox InfiniBand and Ethernet Driver for Microsoft Windows Server 2019

By downloading, you agree to the terms and conditions of the Hewlett Packard Enterprise Software License Agreement.

Note: Some software requires a valid warranty, current Hewlett Packard Enterprise support contract, or a license fee.

| Type: | Driver - Network |
| Version: | 5.50.52000 (14 May 2019) |
| Operating System(s): | Microsoft Windows Server 2019 |
| File name: | MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip (50 MB) |

InfiniBand and Ethernet driver for use with Microsoft Windows Server 2019

New Features in Version 5.50.52000:

The package contains the following versions of components:

- Bus, eth, IPoIB and MUX drivers version is 5.50.14688.

- The CIM Provider version is 5.50.14688.

To ensure the integrity of your download, HPE recommends verifying your results with this SHA-256 Checksum value:

| 6edbe263a499906f51d5be5d838b3ac1345348af759f01127b22caaf2fd4dad0 | MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip |
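One way to perform this checksum verification is a short script using Python's standard `hashlib` module. This is a sketch, not an HPE tool: the function names are made up for illustration, and the expected value is the checksum quoted above.

```python
import hashlib

# SHA-256 value published for MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip
EXPECTED_SHA256 = "6edbe263a499906f51d5be5d838b3ac1345348af759f01127b22caaf2fd4dad0"


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the lowercase hex SHA-256 digest of the file at path."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so a 50 MB archive is not loaded into memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path, expected=EXPECTED_SHA256):
    """Return True if the file's digest equals expected (case-insensitive)."""
    return sha256_of_file(path) == expected.strip().lower()
```

If `matches_expected("MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip")` returns `False`, download the file again before installing.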

Reboot Requirement:

Reboot is required after installation for updates to take effect and hardware stability to be maintained.

Installation:

Steps for Installing Mellanox VPI driver version 5.50:

- Download 'MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip' on to the node.

- Extract the contents of the zip file.

- Double-click 'MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.exe' and follow the installation instructions below:

- Click 'NEXT' on the Welcome screen.

- Accept the license agreement and click 'NEXT'.

- Accept the default location 'C:\Program Files\Mellanox\MLNX_VPI' and click 'NEXT'.

- Check the box 'Configure your system for maximum performance' and click 'NEXT'.

- Select setup type 'Complete' and click 'Next'.

- Click 'Install'.

- Once the installation completes, click 'Finish'.

- Restart the node.
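The extraction step above can also be scripted, which may help when preparing several nodes. The following is a minimal sketch using Python's standard `zipfile` module. The function name and the destination folder are assumptions for illustration, and the installer itself still has to be run as described in the steps.

```python
import zipfile
from pathlib import Path


def extract_archive(zip_path, dest_dir):
    """Extract every member of zip_path into dest_dir and return their names."""
    destination = Path(dest_dir)
    destination.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(destination)
        return archive.namelist()
```

For example, `extract_archive("MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip", "mlnx_driver")` unpacks the download into a hypothetical `mlnx_driver` folder and lists what it contained.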

End User License Agreements:

HPE Software License Agreement v1

Upgrade Requirement:

Recommended - HPE recommends users update to this version at their earliest convenience.

Important:

Beta Features:

The following features are currently at the beta level:

- 'ibdump'

- IPv6 support of IPoIB (IP over InfiniBand) in an SR-IOV (Single Root Input/Output Virtualization) guest OS over KVM (Kernel-based Virtual Machine).

- IPoIB teaming support is at beta level and is supported only on native machines (not in Hyper-V or SR-IOV).

Unsupported features:

The following functionality/features are unsupported in WinOF Rev 5.50:

- Wake-on-LAN.

- Software RSC (Receive Segment Coalescing) for tunneled traffic.

- RDMA in the guest OSes.

- ND over a virtual switch attached to an IPoIB port.

- Memory Translation Table (MTT) Optimization.

Certain software including drivers and documents may be available from Mellanox. If you select a URL that directs you to http://www.mellanox.com/, you are then leaving HPE.com. Please follow the instructions on http://www.mellanox.com/ to download Mellanox software or documentation. When downloading the Mellanox software or documentation, you may be subject to Mellanox terms and conditions, including licensing terms, if any, provided on its website or otherwise. HPE is not responsible for your use of any software or documents that you download from http://www.mellanox.com/, except that HPE may provide a limited warranty for Mellanox software in accordance with the terms and conditions of your purchase of the HPE product or solution.

- For a list of known issues with this release, refer to Chapter 1 (Release Notes), subsection 1.8 of 'Mellanox WinOF VPI Documentation' bundled with the driver download.

- Performance tuning guide can be obtained via the following download page:

- Topology guide can be obtained via the following download page:

- Mellanox InfiniBand configurator can be obtained via the following download page:

Notes:

The Mellanox WinOF VPI Documentation, containing the 'Release Notes' and 'User Manual', is bundled with the driver download.

Supported Devices and Features:

Supported Network Adapter cards:

- ConnectX-3 Pro InfiniBand (SDR/DDR/QDR/FDR10/FDR) and ConnectX-3 Pro Ethernet (10, 40, 50 and 56 Gb/s).

- ConnectX-3 InfiniBand (SDR/DDR/QDR/FDR10/FDR) and ConnectX-3 Ethernet (10, 40, 50 and 56 Gb/s).

Supported Firmware Versions:

| NICs | Recommended Firmware version | Additional Firmware Supported |
| ConnectX-3 Pro / ConnectX-3 Pro EN | 2.42.5044 | 2.42.5004 |
| ConnectX-3 / ConnectX-3 EN | 2.42.5044 | 2.42.5004 |

Note: Firmware version 2.40.5000 requires an upgrade to version 2.40.5032 or above before installing driver version 5.50 or above.
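The firmware rule in the note above can be expressed as a simple version comparison. The sketch below uses hypothetical function names. It also assumes that any firmware older than 2.40.5032 needs upgrading first, which is a generalization, since the note only names version 2.40.5000.

```python
def parse_version(text):
    """Turn a dotted version such as "2.40.5032" into a tuple of integers."""
    return tuple(int(part) for part in text.strip().split("."))


# Minimum firmware named in the note for driver version 5.50 and above.
MIN_FIRMWARE = parse_version("2.40.5032")


def firmware_ready_for_driver(firmware):
    """Return True if the firmware is at or above the minimum version.

    ASSUMPTION: every firmware below 2.40.5032 is treated as needing an
    upgrade; the note itself only mentions 2.40.5000.
    """
    return parse_version(firmware) >= MIN_FIRMWARE
```

Comparing tuples of integers avoids the mistake of comparing version strings character by character. Under this rule `firmware_ready_for_driver("2.40.5000")` is `False`, while both firmware versions listed in the table pass.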

Recommended - HPE recommends users update to this version at their earliest convenience.

The following issues have been resolved in version 5.50.52000:

- An issue that resulted in a BSOD (Blue Screen Of Death) when changing the number of queues/CQ in VMMQ (Virtual Machine Multi-Queue).

- A system crash that occurred while updating the VPort in the error flow.

- A race condition that occurred when sending RDMA-send-messages between the storage nodes and the compute nodes which resulted in RDMA (Remote Direct Memory Access) connectivity loss.

- Fixed an issue that prevented the NIC from enabling NVGRE (Network Virtualization using Generic Routing Encapsulation) or VXLAN (Virtual Extensible LAN) although they were enabled by the user.

- Fixed a BSOD issue that occasionally occurred when using a machine with more than 128 cores.

- The systems_snapshot tool used to hang when the ETL (Extract, Transform, Load) folder was not present.

- VMMQ: Fixed an issue that resulted in a BSOD due to an error when changing the number of queues/CQ.

- A race condition that occurred when simultaneously querying the Perfmon counters (the 'Mellanox Adapter Traffic Counters' and the 'Mellanox Adapter QoS Counters') and deleting the vPort OID, which resulted in a BSOD.

- A rare issue that caused a deadlock between delete vPort and the CheckForHang routine.


Version: 5.50.50010 (19 Dec 2018)

Upgrade Requirement:

Recommended - HPE recommends users update to this version at their earliest convenience.

The following issues have been resolved in version 5.50.50010:

- System would cease functioning when the vNic was detached from the VM during heavy traffic when in VMQ/VMMQ mode.

- RoCE (RDMA Over Converged Ethernet) connection failed occasionally when the Universal/Local (U/L) bit in the MAC was set to 1.

- Fixed an issue that caused the mlxtool PDDR (Port Diagnostic Database Register) tool to provide some inaccurate information for InfiniBand links.

- Disabled the option to stop the uninstall process once the driver uninstallation process started.

- Networks with new Subnet Managers (OpenSM 4.7.0 and up) would drop malformed multicast-join packets issued by the driver. The driver now constructs the multicast join request correctly.

- When mapping the DSCP (Differentiated Services Code Point) to a certain priority, if the DSCP values are lower than the max priority (i.e., DSCP(4)->Prio(0)), the priority's value will be set to the same value as the DSCP.

- Driver would not load due to a race condition that existed between the resiliency flow and the FLR request when issuing an OID_SRIOV_RESET_VF request to reset a specified PCI Express (PCIe) Virtual Function (VF).

- The driver would reset the adapter as a result of a false alarm indicating that a receive queue was not processing.

- Fixed an issue that caused a Black Screen upon driver's removal due to extremely low memory conditions, when the memory allocations started to fail.

- The Memory Region (MR) was displayed as registered when it was not, which prevented the user from accessing it. This incorrect status display of the MR was a result of the ND function 'INDEndpoint' reporting error status when it returned from the underlying functions. This fix ensures that the user receives the correct error status in such a scenario.

- The number of MSI-X cores in a Virtual Function was limited to 8. This limit has been expanded to 128.

- BSOD (Blue Screen Of Death) occurred on servers with more than 64 cores because the Tx traffic did not honor the Tx affinity implied by the TSS, when the number of potential RSS CPUs was greater than 64.

- When the mlxtool dbg resources command was executed, the FS_RULE quota number was displayed instead of the 'Managed by PF' message.

- When setting the LogNumQp and LogNumRdmaRc registry settings to their maximum value, the WinOF bus driver failed to load.

- The 'TX Ring Is Full Packets' perfmon counter did not function properly on IPoIB.

- When installing the driver over the Microsoft Windows Server 2012 R2 inbox driver, the LogNumQP parameter remained in the registry. Thus, the number of QPs was limited to 64K instead of 512K (the driver's default).

- Fixed an issue that caused a system crash when the interface connected to the vSwitch was disabled and the operating system did not clean all VMQs (Virtual Machine Queues).

- Communication Manager would stop functioning while attempting to obtain ND/NDK (Network Direct Kernel) connection.

- Command failure and protection domain violation occurred when running the ND application.

- Mlxtool command, 'mlxtool dbg ipoib-ep []', reported partial results of the EndPoint list when there was a large number of endpoints.

- VM would stop functioning when restarting the PF drivers and their peers in the target machine. The VM had to be force-restarted to restore the functionality.

- Fixed an issue that caused a memory leak when RoCE was enabled.

- A wrong value was set to the *ReceiveBuffers key when it was restored to default.

- Fixed a crash that occurred when changing the Ethernet IP address while RDMA traffic was running.

- Fixed a crash that occurred in the IPoIB driver stack.

- A BSOD that occurred when a memory allocation failed upon driver startup.

- The connection port numbers did not increase sequentially when running nd_*_bw application with multiple QPs.

- Tx traffic did not honor the Tx affinity implied by the TSS when the number of potential RSS CPUs was greater than 64.

- When the driver was installed over Microsoft Windows Server 2012 R2 inbox driver, the number of QPs was limited to 64K instead of 512K (the driver's default) because the LogNumQP parameter remained in the registry.

- Removing a PKey that was a part of an IPoIB team interface disabled the team and the option to delete it.

- An incorrect number of HCAs was returned from executing the Get-MlnxPCIDeviceSriovSetting command.

- The mlxtool dbg resources command failed to pull information about the last VF, and showed the PF as VF0.

- Using invalid parameters in mlxtool perfstat command resulted in infinite waiting time and results were not returned.

- The Get-MlnxPCIDeviceSriovSetting command failed on a server with more than one device, when one of the devices was disabled. Following the fix, the command returned results only for the devices that were enabled.

Changes and New Features in Version 5.50.50010:

- Dump Me Now (DMN), a bus driver (mlx4_bus.sys) feature that generates dumps and traces from various components, including hardware, firmware and software, upon internally detected issues (by the resiliency sensors), user requests (mlxtool) or ND application requests via the extended Mellanox ND API. DMN is unsupported on VFs.

- Support for systems with up to 252 logical processors when Hyperthreading is enabled and up to 126 logical processors when Hyperthreading is disabled.

- RSC (Receive Segment Coalescing) solution in TCP/IP traffic to reduce CPU overhead.

- NDSPI to control CQ (Completion Queue) moderation.

- A new counter for packets with no destination resource.

- A new registry key that allows users to configure the E2E Congestion Control feature.

- Added to the vlan_config tool the ability to create VLANs for the Physical Function (PF) in addition to the Virtual Function (VF).

- Added support for VMQ (Virtual Machine Queue) over IPoIB in Windows Server 2016.

- Ability to collect firmware MST dumps in cases of system bug check.

- Added an event log message (ID 273) that is printed when the number of resources to load the VF is insufficient.

- A counter for the number of packets discarded due to an invalid QP (Queue Pair) number.

- DSCP (Differentiated Services Code Point) based counters to support traffic where no VLAN/priority is present.

- Added support for servers with more than 64 cores.

- Added support for Windows Server 2019.

- Modified the RSC (Receive Segment Coalescing) default mode when using Windows Server 2019. RSC is disabled by default in Windows Server 2019.

| Type: | Driver - Network | | Version: | 5.50.52000(14 May 2019) | | Operating System(s): | | Microsoft Windows Server 2019 |

|

DescriptionInfiniBand and Ethernet driver for use with Microsoft Windows Server 2019 EnhancementsNew Features in Version 5.50.52000: - The package contains the following versions of components:

- Bus, eth, IPoIB and MUX drivers version is 5.50.14688.

- The CIM Provider version is 5.50.14688.

Installation InstructionsTo ensure the integrity of your download, HPE recommends verifying your results with this SHA-256 Checksum value: | 6edbe263a499906f51d5be5d838b3ac1345348af759f01127b22caaf2fd4dad0 | MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip |

Reboot Requirement:

Reboot is required after installation for updates to take effect and hardware stability to be maintained. Installation:

Steps for Installing Mellanox VPI driver version 5.50: - Download 'MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.zip' on to the node.

- Extract contents of the zip file.

- Double click 'MLNX_VPI_WinOF-5_50_52000_All_Win2019_x64.exe' and follow the instructions for installation as explained below:

- Click 'NEXT' on the Welcome screen.

- Accept license agreement and click 'NEXT'.

- Accept default location 'C:Program FilesMellanoxMLNX_VPI' and click 'NEXT'.

- Check the box 'Configure your system for maximum performance' and click 'NEXT'.

- Select setup type 'Complete' and click 'Next'.

- Click 'Install'.

- Once the installation completes, click 'Finish'.

- Restart the node.

Release NotesEnd User License Agreements:

HPE Software License Agreement v1

Upgrade Requirement:

Recommended - HPE recommends users update to this version at their earliest convenience. Important:

Beta Features:

The following features are currently at the beta level: - 'ibdump'

- IPv6 support of IPoIB (IP Over InfiniBand) in an SR-IOV (Single root input/output virtualization) guest OS over KVM (Kernel-based Virtual Machine).

- IPoIB teaming support is at beta level and it is supported only on native machine (and not in HyperV or SR-IOV).

Unsupported features:

The following are the unsupported functionality/features in WinOF Rev 5.50: - Wake-On-Lan.

- Software RSC (Recieve Segment Coalescing) for tunneled traffic

- RDMA in the Guest OSes.

- ND over virtual switch attached to IPoIB port.

- Memory Translation Table (MTT) Optimization.

Certain software including drivers and documents may be available from Mellanox. If you select a URL that directs you to http://www.mellanox.com/, you are then leaving HPE.com. Please follow the instructions on http://www.mellanox.com/ to download Mellanox software or documentation. When downloading the Mellanox software or documentation, you may be subject to Mellanox terms and conditions, including licensing terms, if any, provided on its website or otherwise. HPE is not responsible for your use of any software or documents that you download from http://www.mellanox.com/, except that HPE may provide a limited warranty for Mellanox software in accordance with the terms and conditions of your purchase of the HPE product or solution. - For a list of known issues with this release, refer to Chapter 1 (Release Notes), subsection 1.8 of 'Mellanox WinOF VPI Documentation' bundled with the driver download.

- Performance tuning guide can be obtained via the following download page:

- Topology guide can be obtained via the following download page:

- Mellanox InfiniBand configurator can be obtained via the following download page:

Notes:

Mellanox WinOF VPI Documentation containing the 'Release Notes' and 'User Manual' are bundled along with the driver download. Supported Devices and Features:

Supported Network Adapter cards: - ConnectX-3 Pro InfiniBand (SDR/DDR/QDR/FDR10/FDR) and ConnectX-3 Pro Ethernet (10, 40, 50 and 56 Gb/s).

- ConnectX-3 InfiniBand (SDR/DDR/QDR/FDR10/FDR) and ConnectX-3 Ethernet (10, 40, 50 and 56 Gb/s).

Supported Firmware Versions: | NICs | Recommended Firmware version | Additional Firmware Supported | | ConnectX-3 Pro / ConnectX-3 Pro EN | 2.42.5044 | 2.42.5004 | | ConnectX-3 / ConnectX-3 EN | 2.42.5044 | 2.42.5004 |

Note : Firmware version 2.40.5000 requires upgrade to version 2.40.5032 and above, before installing driver version 5.50 and above. FixesUpgrade Requirement:

Recommended - HPE recommends users update to this version at their earliest convenience. The following issues have been resolved in version 5.50.52000: - An issue that resulted in a BSOD (Blue Screen Of Death) when changed the number of queues/CQ in VMMQ (Virtual Machine Multi-Queue).

- System crash occured while updating the VPort in the error flow.

- A race condition that occurred when sending RDMA-send-messages between the storage nodes and the compute nodes which resulted in RDMA (Remote Direct Memory Access) connectivity loss.

- Fixed an issue that prevented the NIC from enabling NVGRE (Network Virtualization using Generic Routing Encapsulation) or VXLAN (Virtual Extensible LAN) although they were enabled by the user.

- Fixed a BSOD issue that occasionally occurred when used a machine with more than 128 cores.

- The systems_snapshot tool used to hung when the ETL(Extract, Transform,Load) folder was not present.

- VMMQ: Fixed an issue that resulted in a BSOD due to an error when changing the number of queues/CQ.

- A race condition that occurred when simultaneously querying the Permon counters (the 'Mellanox Adapter Traffic Counters' and the 'Mellanox Adapter QoS Counters') and deleting the vPort OID, which resulted in BSOD.

- A rare issue that caused a deadlock between delete vPort and CheckForHang Routine.

ImportantBeta Features:

The following features are currently at the beta level: - 'ibdump'

- IPv6 support of IPoIB (IP Over InfiniBand) in an SR-IOV (Single root input/output virtualization) guest OS over KVM (Kernel-based Virtual Machine).

- IPoIB teaming support is at beta level and it is supported only on native machine (and not in HyperV or SR-IOV).

Unsupported features:

The following are the unsupported functionality/features in WinOF Rev 5.50: - Wake-On-Lan.

- Software RSC (Recieve Segment Coalescing) for tunneled traffic

- RDMA in the Guest OSes.

- ND over virtual switch attached to IPoIB port.

- Memory Translation Table (MTT) Optimization.

Certain software including drivers and documents may be available from Mellanox. If you select a URL that directs you to http://www.mellanox.com/, you are then leaving HPE.com. Please follow the instructions on http://www.mellanox.com/ to download Mellanox software or documentation. When downloading the Mellanox software or documentation, you may be subject to Mellanox terms and conditions, including licensing terms, if any, provided on its website or otherwise. HPE is not responsible for your use of any software or documents that you download from http://www.mellanox.com/, except that HPE may provide a limited warranty for Mellanox software in accordance with the terms and conditions of your purchase of the HPE product or solution. - For a list of known issues with this release, refer to Chapter 1 (Release Notes), subsection 1.8 of 'Mellanox WinOF VPI Documentation' bundled with the driver download.

- Performance tuning guide can be obtained via the following download page:

- Topology guide can be obtained via the following download page:

- Mellanox InfiniBand configurator can be obtained via the following download page:

Revision HistoryVersion:5.50.52000 (14 May 2019) Upgrade Requirement:

Recommended - HPE recommends users update to this version at their earliest convenience. The following issues have been resolved in version 5.50.52000: - An issue that resulted in a BSOD (Blue Screen Of Death) when changed the number of queues/CQ in VMMQ (Virtual Machine Multi-Queue).

- System crash occured while updating the VPort in the error flow.

- A race condition that occurred when sending RDMA-send-messages between the storage nodes and the compute nodes which resulted in RDMA (Remote Direct Memory Access) connectivity loss.

- Fixed an issue that prevented the NIC from enabling NVGRE (Network Virtualization using Generic Routing Encapsulation) or VXLAN (Virtual Extensible LAN) although they were enabled by the user.

- Fixed a BSOD issue that occasionally occurred when used a machine with more than 128 cores.

- The systems_snapshot tool used to hung when the ETL(Extract, Transform,Load) folder was not present.

- VMMQ: Fixed an issue that resulted in a BSOD due to an error when changing the number of queues/CQ.

- A race condition that occurred when simultaneously querying the Permon counters (the 'Mellanox Adapter Traffic Counters' and the 'Mellanox Adapter QoS Counters') and deleting the vPort OID, which resulted in BSOD.

- A rare issue that caused a deadlock between delete vPort and CheckForHang Routine.

New Features in Version 5.50.52000: - The package contains the following versions of components:

- Bus, eth, IPoIB and MUX drivers version is 5.50.14688.

- The CIM Provider version is 5.50.14688.

(19 Dec 2018) Upgrade Requirement:

Recommended - HPE recommends users update to this version at their earliest convenience. The following issues have been resolved in version 5.50.50010: - System would cease functioning when the vNic was detached from the VM during heavy traffic when in VMQVMMQ mode.

- RoCE (RDMA Over Converged Ethernet) connection failed occasionally when the Universal/Local (U/L) bit in the MAC was set to 1.

- Fixed an issue that caused the mlxtool PDDR (Port Diagnostic Database Register) tool to provide some inaccurate information for Infiniband links.

- Disabled the option to stop the uninstall process once the driver uninstallation process started.

- Networks with new Subnet Managers (OpenSM 4.7.0 and up) would drop malformed multicast-join packets issued by the driver. The driver now constructs the multicast join request correctly.

- When mapping a DSCP (Differentiated Service Code Point) value to a priority, if the DSCP value is lower than the max priority (i.e. DSCP(4)->Prio(0)), the priority's value will be set to the DSCP's value.

- Driver would not load due to a race condition that existed between the resiliency flow and the FLR request when issuing an OID_SRIOV_RESET_VF request to reset a specified PCI Express (PCIe) Virtual Function (VF).

- The driver would reset the adapter as a result of a false alarm indicating that a receive queue was not processing.

- Fixed an issue that caused a Black Screen upon driver's removal due to extremely low memory conditions, when the memory allocations started to fail.

- The Memory Region (MR) was displayed as registered when it was not, thus preventing the user from accessing it. This incorrect status display of the MR was a result of the ND function 'INDEndpoint' reporting an error status when it returned from the underlying functions. This fix ensures that the user receives the correct error status in such a scenario.

- The number of MSI-X cores in a Virtual Function was limited to 8. This limit has been expanded to 128.

- BSOD (Blue Screen Of Death) occurred on servers with more than 64 cores because the Tx traffic did not honor the Tx affinity implied by the TSS, when the number of potential RSS CPUs was greater than 64.

- When the mlxtool dbg resources command was executed, the FS_RULE quota number was displayed instead of the 'Managed by PF' message.

- When setting the LogNumQp and LogNumRdmaRc registry settings to their maximum value, the WinOF bus driver failed to load.

- The 'TX Ring Is Full Packets' perfmon counter did not function properly on IPoIB.

- When installing the driver over the Microsoft Windows Server 2012 R2 inbox driver, the LogNumQP parameter remained in the registry. Thus, the number of QPs was limited to 64K instead of 512K (the driver's default).

- Fixed an issue that caused a system crash when the interface connected to the vSwitch was disabled and the operating system did not clean all VMQs (Virtual Machine Queues).

- Communication Manager would stop functioning while attempting to obtain an ND/NDK (Network Direct Kernel) connection.

- Command failure and protection domain violation occurred when running the ND application.

- Mlxtool command, 'mlxtool dbg ipoib-ep []', reported partial results of the EndPoint list when there was a large number of endpoints.

- VM would stop functioning when restarting the PF drivers and their peers in the target machine. The VM had to be force-restarted to restore the functionality.

- Fixed an issue that caused a memory leak when RoCE was enabled.

- A wrong value was set for the *ReceiveBuffers key when it was restored to default.

- Fixed a crash that occurred when changing the Ethernet IP address while RDMA traffic was running.

- Fixed a crash that occurred in the IPoIB driver stack.

- A BSOD that occurred when a memory allocation failed upon driver startup.

- The connection port numbers did not increase sequentially when running an nd_*_bw application with multiple QPs.

- Tx traffic did not honor the Tx affinity implied by the TSS when the number of potential RSS CPUs was greater than 64.

- When the driver was installed over the Microsoft Windows Server 2012 R2 inbox driver, the number of QPs was limited to 64K instead of 512K (the driver's default) because the LogNumQP parameter remained in the registry.

- Removing a PKey that was a part of an IPoIB team interface disabled the team and the option to delete it.

- An incorrect number of HCAs was returned from executing the Get-MlnxPCIDeviceSriovSetting command.

- The mlxtool dbg resources command failed to pull information about the last VF, and showed the PF as VF0.

- Using invalid parameters in the mlxtool perfstat command resulted in an infinite wait, and no results were returned.

- The Get-MlnxPCIDeviceSriovSetting command failed on a server with more than one device, when one of the devices was disabled. Following the fix, the command returned results only for the devices that were enabled.
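The LogNumQP items above quote QP limits of 64K and 512K. As a rough illustration only, the sketch below assumes (from the parameter name; these notes do not state the encoding) that LogNumQP holds the base-2 logarithm of the QP count. The values 16 and 19 are inferred from the quoted limits and are not taken from the driver documentation, and the helper names are hypothetical.

```python
def qp_limit(log_num_qp: int) -> int:
    """Return the QP count implied by a base-2 log value (assumed encoding)."""
    if log_num_qp < 0:
        raise ValueError("log value must be non-negative")
    return 1 << log_num_qp


def format_k(count: int) -> str:
    """Format a count in units of 1024, e.g. 65536 -> '64K'."""
    return f"{count // 1024}K"


# Hypothetical values inferred from the limits quoted in the notes.
STALE_LOG_NUM_QP = 16    # 2**16 QPs, the limit seen with the leftover registry value
DEFAULT_LOG_NUM_QP = 19  # 2**19 QPs, the limit described as the driver's default

if __name__ == "__main__":
    for name, value in (("leftover", STALE_LOG_NUM_QP), ("default", DEFAULT_LOG_NUM_QP)):
        print(f"{name}: LogNumQP={value} -> {format_k(qp_limit(value))} QPs")
```

Under this assumption, a leftover registry value three steps below the default reduces the available QPs by a factor of eight, which matches the 64K versus 512K figures in the notes.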

Changes and New Features in Version 5.50.50010:

- Dump Me Now (DMN), a bus driver (mlx4_bus.sys) feature that generates dumps and traces from various components, including hardware, firmware and software, upon internally detected issues (by the resiliency sensors), user requests (mlxtool) or ND application requests via the extended Mellanox ND API. DMN is unsupported on VFs.

- Support for systems with up to 252 logical processors when Hyperthreading is enabled and up to 126 logical processors when Hyperthreading is disabled.

- RSC (Receive Segment Coalescing) solution in TCP/IP traffic to reduce CPU overhead.

- NDSPI to control CQ (Completion Queue) moderation.

- A new counter for packets with no destination resource.

- A new registry key that allows users to configure the E2E Congestion Control feature.

- Added to the vlan_config tool the ability to create VLANs for the Physical Function (PF) in addition to the Virtual Function (VF).

- Added support for VMQ (Virtual Machine Queue) over IPoIB in Windows Server 2016.

- Ability to collect firmware MST dumps in the event of a system bug check.

- Added an event log message (ID 273) that is printed when the number of resources to load the VF is insufficient.

- A counter for the number of packets discarded due to an invalid QP (Queue Pair) number.

- DSCP (Differentiated Service Code Point) based counters to support traffic where no VLAN/priority is present.

- Added support for servers with more than 64 cores.

- Added support for Windows Server 2019.

- Modified the RSC (Receive Segment Coalescing) default mode when using Windows Server 2019. RSC is disabled by default in Windows Server 2019.


Legal Disclaimer: Products sold prior to the November 1, 2015 separation of Hewlett-Packard Company into Hewlett Packard Enterprise Company and HP Inc. may have older product names and model numbers that differ from current models.
